The Key to Launching Sustainable Technology

We are in the midst of a global crisis: every industry needs to reduce carbon emissions if we are to limit global warming to 1.5 °C above pre-industrial levels, the goal set out in the Paris Agreement[1]. This drives a need for breakthrough technologies that can both reduce carbon and create value. We can draw on past experience to reduce the time, cost and risk of technology scale-up through a set of guidelines and practices that are the key to Practical Technology Scaleup. Applying them increases the chance of success for individual technologies and will help us as a society to meet these aggressive climate targets.

I have had the chance to scale up and launch new products and technologies across a range of industries, including sustainable fuels, renewable chemicals, bioprocessing, petrochemicals, specialty chemicals, distillation, and catalysis. Over my 23 years of industrial experience I have developed a series of rules and guidelines for scaling and launching new technology. The challenge in each case has been to:

• reduce technology risk

• reduce time to market

• reduce cost

• maximize value

These are often competing objectives, and reducing time to market and reducing risk usually win out. Of course, if the capital and operating costs are too high the technology will not succeed, so we cannot ignore cost and value either.

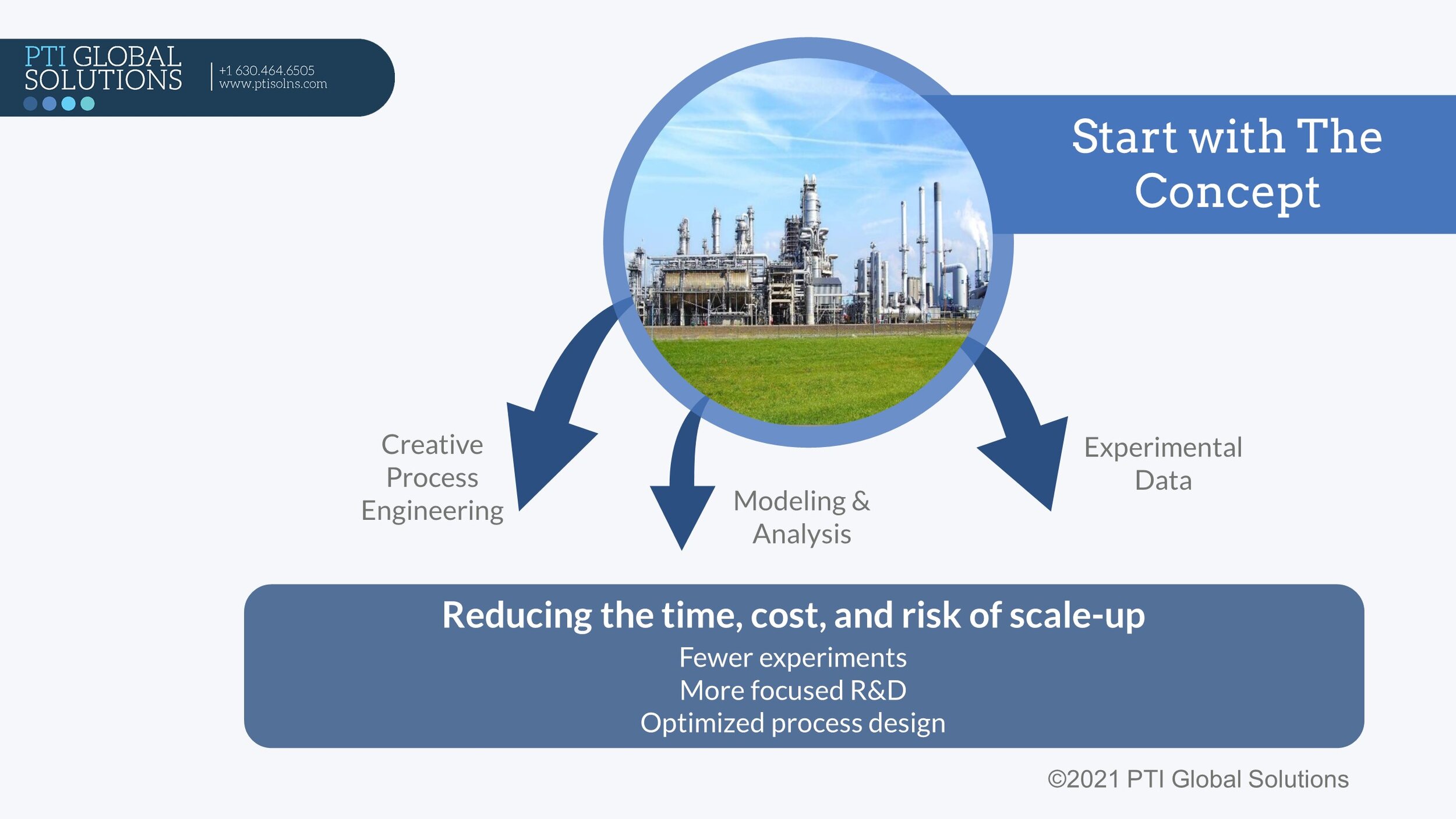

It is critical to ‘start with the end in mind’ by establishing a Technology Concept (or Process Concept) as the framework that drives new technology development, scale-up and commercialization. This technology concept is not set in stone; in fact, it should be reviewed and updated as we progress through the scale-up effort. We establish the technology concept to drive the scale-up effort, not just inform it. This enables us to direct innovation toward the greatest value from breakthrough and disruptive ideas: we identify challenges early, fail fast while failure is still cheap and quick, and make sure our efforts are focused on solving commercially relevant problems.

The technology concept is developed, and iteratively revised, through a combination of Creative Process Engineering, Multi-scale Experimental Data, and Modeling and Analysis.

Creative Process Engineering: The flow scheme is developed, the material balance is estimated, and key process design decisions are identified so that we can establish the best process flowsheet for the technology.

Modeling & Analysis: A good model can save time and money in the lab. Coupled with the right analysis, it can be used to prioritize objectives in the lab, pilot, and demo units. A cautionary note: useful models are more important than perfect models!
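As a minimal sketch of how a useful-but-imperfect model can help prioritize lab work, the Python below runs a one-at-a-time sensitivity analysis on a toy production-cost model. The cost model, the parameter names, and every number in it are hypothetical placeholders chosen for illustration, not data from a real process.

```python
from typing import Callable, Dict, List, Tuple

Params = Dict[str, float]


def production_cost(p: Params) -> float:
    """Toy cost model in $/kg of product (hypothetical, for illustration only)."""
    feed_cost = p["feed_price"] / p["yield"]  # feedstock cost per kg of product
    capital_charge = p["capex"] * p["capital_charge_rate"] / p["annual_output"]
    return feed_cost + p["opex"] + capital_charge


def rank_sensitivities(
    model: Callable[[Params], float], base: Params, swing: float = 0.10
) -> List[Tuple[str, float]]:
    """Vary each parameter by +/- swing, one at a time, and rank the
    parameters by the resulting change in cost relative to the base case."""
    base_cost = model(base)
    results = []
    for name, value in base.items():
        low = model({**base, name: value * (1 - swing)})
        high = model({**base, name: value * (1 + swing)})
        results.append((name, abs(high - low) / base_cost))
    return sorted(results, key=lambda r: r[1], reverse=True)


# Hypothetical base case; none of these values come from a real plant.
BASE_CASE: Params = {
    "feed_price": 0.50,           # $/kg feed
    "yield": 0.40,                # kg product per kg feed
    "opex": 0.30,                 # $/kg product, excluding feedstock
    "capex": 50e6,                # $ installed capital
    "capital_charge_rate": 0.15,  # fraction of capex charged per year
    "annual_output": 20e6,        # kg product per year
}

ranking = rank_sensitivities(production_cost, BASE_CASE)
for name, impact in ranking:
    print(f"{name:22s} {impact:6.1%}")
```

The parameters at the top of the ranking are the ones where better lab or pilot data would reduce the most uncertainty in the economics, which is one simple way to decide what to measure first.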

Experimental Data: We need the right data to prove out breakthrough ideas, secure partners and investors, and develop engineering data for equipment design. Multi-scale data is critical to this effort, and with good planning, multiple assets and external resources can be leveraged.

The key benefits of this approach are:

• Prioritization of R&D to de-risk and optimize a new technology.

• Identification of cost reduction opportunities throughout the scale-up effort.

• Anticipation of engineering needs as early as possible.

In this way we can reduce risk and optimize the economics of our new design, while efficiently managing the time and cost of our efforts.

In future posts I will elaborate on the key concepts presented in this introduction.

[1] https://unfccc.int/process-and-meetings/the-paris-agreement/the-paris-agreement